
Sometimes, after the code has been changed, the DAG executes the old code (an empty DAG without operators) instead of the new code. If you wait and then run the DAG again, it runs the new DAG with the operator.

One manual approach is to shut Airflow down completely:

1. Make sure no Airflow DAGs/tasks are running. In the UI, you can see paused DAGs in the Paused tab.
2. Stop the Airflow worker, webserver, and scheduler.
3. Search for all Airflow child ghost processes using `ps aux | grep airflow`.
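The search for leftover Airflow processes can also be scripted. The following is a minimal sketch, not an official Airflow tool: the function names `find_airflow_processes` and `list_running_airflow_processes` are hypothetical, and the parser assumes the usual 11-column `ps aux` layout where the last column is the command.

```python
import subprocess


def find_airflow_processes(ps_output, keyword="airflow"):
    """Return (pid, command) pairs from `ps aux` output whose command mentions keyword."""
    matches = []
    for line in ps_output.splitlines()[1:]:  # skip the header row
        # Assumed layout: USER PID %CPU %MEM VSZ RSS TTY STAT START TIME COMMAND
        fields = line.split(None, 10)
        if len(fields) < 11:
            continue  # ignore malformed or truncated rows
        pid, command = int(fields[1]), fields[10]
        if keyword in command:
            matches.append((pid, command))
    return matches


def list_running_airflow_processes():
    """Run `ps aux` on this machine and filter its output (hypothetical helper)."""
    output = subprocess.run(
        ["ps", "aux"], capture_output=True, text=True, check=True
    ).stdout
    return find_airflow_processes(output)
```

Calling `list_running_airflow_processes()` after stopping the worker, webserver, and scheduler should ideally return an empty list; anything it does return is a candidate ghost process to inspect by hand.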

#Airflow dag not updating manual#

#Airflow dag not updating code#

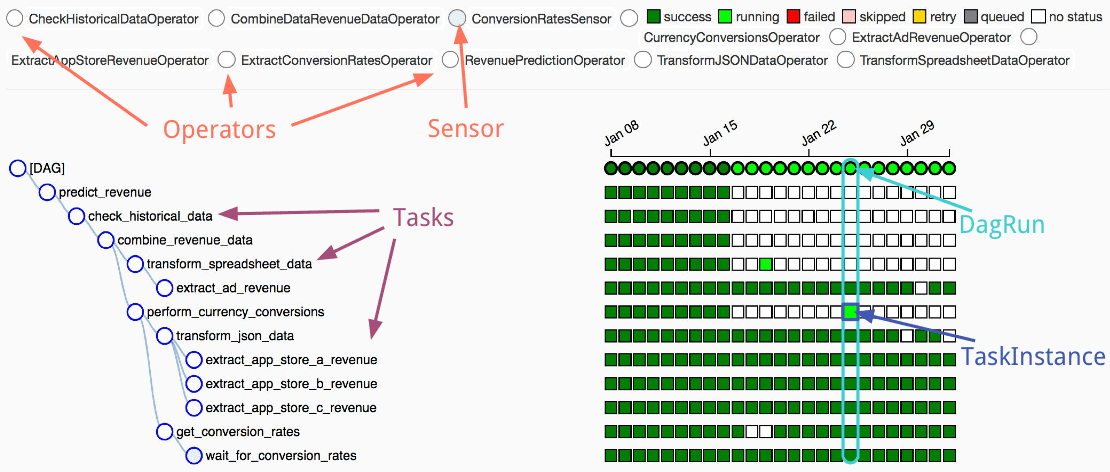

The refresh should load the new content of the file and remove the old version from the cache. I expect that if I run a DAG after clicking the refresh button, it runs the current code without waiting for `dag_dir_list_interval`. However, sometimes even if I refresh the DAG with the "Refresh DAG" button in the UI and run it immediately, Airflow runs the previous DAG.

The scheduler keeps re-parsing the DAG files, so the code of the DAGs is updated continuously, and any change that breaks a DAG becomes visible in the GUI, not only when the DAG is triggered. As such, creating database connections should be avoided in the DAG file definition (it's absolutely fine within the actual task that gets executed).

When I change the schedule of the DAG, it does not pick up the latest start date, and I'm not sure why. When I deleted the old DAG (it was a test DAG, so I could delete it; in prod I can't) and created a new DAG with the same name and the latest start date, i.e. the 30th, it ran as per the schedule.

For Amazon Managed Workflows for Apache Airflow (MWAA), the AWS troubleshooting topic describes common issues and errors you may encounter when using Apache Airflow there, along with recommended steps to resolve them.

Don't worry, `airflow db upgrade` is safe to run even if there are no migrations to perform.
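The advice about keeping database connections out of the DAG file definition can be illustrated with a small sketch. It makes several assumptions: `sqlite3` stands in for whatever database is actually used, and `count_rows` and the `events` table are hypothetical names. In a real DAG, a function like this would typically be passed to a `PythonOperator` via `python_callable`, so it only runs when the task executes.

```python
import sqlite3

# Avoid this at module level: the DAG file is parsed repeatedly,
# so a connection opened here would be opened on every parse.
# conn = sqlite3.connect("example.db")


def count_rows(db_path=":memory:"):
    """Hypothetical task callable: connects only when the task actually runs."""
    conn = sqlite3.connect(db_path)
    try:
        # Create and fill a tiny demo table so the sketch is self-contained.
        conn.execute("CREATE TABLE IF NOT EXISTS events (id INTEGER)")
        conn.execute("INSERT INTO events VALUES (1)")
        return conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
    finally:
        conn.close()  # always release the connection, even on error
```

Importing this module opens no connection at all; the cost is paid only inside `count_rows`, which is the pattern the advice describes.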

#Airflow dag not updating upgrade#

Why you need to upgrade: newer Airflow versions can contain database migrations, so you must run `airflow db upgrade` to upgrade your database with the schema changes in the Airflow version you are upgrading to. If your Amazon MWAA environment is running Apache Airflow v2.0.2 or older, you will not be able to implement custom UI.

A new DAG should be recognized by your Airflow instance within a minute; updated DAGs should be recognized within 10 seconds or so.

I created a DAG that will run on a weekly basis. Below is what I tried, and it's working as expected.

```python
from datetime import datetime, timedelta

from airflow import DAG
from airflow.operators.bash_operator import BashOperator

SCHEDULE_INTERVAL = timedelta(weeks=1, seconds=00, minutes=00, hours=00)

default_args = {
    'start_date': datetime(2020, 12, 20, hour=00, minute=00, second=00),
}

with DAG("DAG", default_args=default_args, schedule_interval=SCHEDULE_INTERVAL, catchup=True) as dag:
    ...  # tasks (e.g. a BashOperator) are not shown in the original post
```

As per the schedule, it ran on the 27th. As there is a change in requirement, I'm now updating the start date to the 30th instead of the 27th (my idea is to start the schedule from the 30th and run every week from there).
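The run on the 27th follows from how Airflow handles `schedule_interval`: a run is triggered at the end of the interval it covers, so the first run fires at `start_date` plus one interval. The sketch below only reproduces that arithmetic with the standard library; `first_trigger_times` is a hypothetical helper, not an Airflow function.

```python
from datetime import datetime, timedelta


def first_trigger_times(start_date, interval, count=3):
    """Return the first `count` trigger times, each at the end of its interval."""
    return [start_date + interval * (i + 1) for i in range(count)]


weekly = timedelta(weeks=1)

# start_date of 20 December 2020, as in the DAG above
for moment in first_trigger_times(datetime(2020, 12, 20), weekly):
    print(moment.date())
# prints 2020-12-27, 2021-01-03, 2021-01-10
```

Under the same assumption, a `start_date` of the 30th would trigger its first run one week later rather than on the 30th itself, which is worth keeping in mind when choosing the new start date.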
